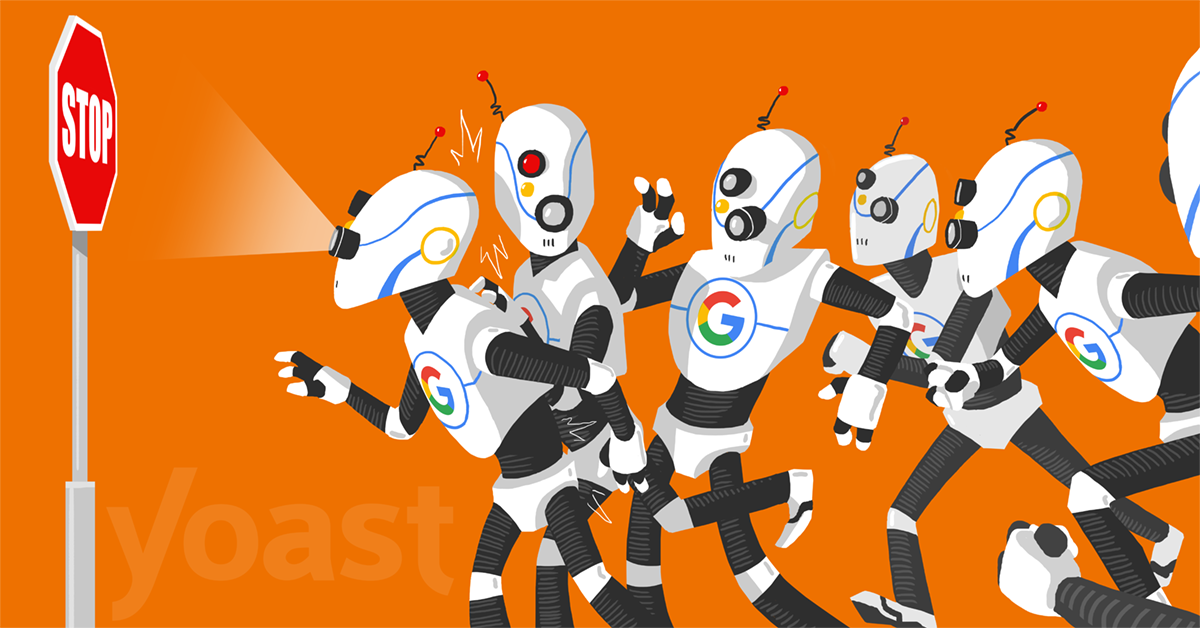

What is robots.txt?

Robots.txt is a text file that webmasters create to tell web robots (typically search engine crawlers) how to crawl the pages on their website. The robots.txt file is part of the robots exclusion protocol (REP), a group of web standards that regulate how robots crawl the web, access and index content, and serve that content up to users. The REP also includes directives like meta robots, as well as page-, subdirectory-, or site-wide instructions for how search engines should treat links (such as “follow” or “nofollow”).
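To make this concrete, here is a minimal sketch of how a crawler might consult a robots.txt file, using Python's standard-library `urllib.robotparser`. The rules, the `ExampleBot` user agent, and the helper name `is_allowed` are made up for illustration; real search engines use their own parsers, which may differ in the details.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, invented for this example:
# every robot may crawl the site except for anything under /admin/.
SAMPLE_RULES = """\
User-agent: *
Disallow: /admin/
"""


def is_allowed(rules: str, user_agent: str, url: str) -> bool:
    """Return True if the given rules permit user_agent to crawl url."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())  # parse text directly, no network access
    return parser.can_fetch(user_agent, url)


for url in (
    "https://www.yourdomain.com/blog/",
    "https://www.yourdomain.com/admin/login",
):
    print(url, is_allowed(SAMPLE_RULES, "ExampleBot", url))
```

With these sample rules, the blog URL is reported as crawlable and the admin URL is not.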

What happens if you don’t have a robots.txt file?

If a robots.txt file is missing, search engine crawlers assume that all publicly available pages of the website can be crawled and added to their index.

What happens if the robots.txt is not well formatted?

It depends on the issue. Crawlers generally skip individual lines they cannot parse and apply the rules they can. If search engines cannot understand any of the file's contents because it is misconfigured, they will still access the website and ignore whatever is in robots.txt.
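As a rough illustration, the sketch below feeds two badly formatted files to Python's standard-library `urllib.robotparser`. It only shows how this one parser behaves; the sample lines, the `ExampleBot` user agent, and the helper name `allowed` are assumptions made for the example, and individual search engines may handle errors differently.

```python
from urllib.robotparser import RobotFileParser


def allowed(lines, url, user_agent="ExampleBot"):
    """Parse robots.txt lines and report whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(lines)
    return parser.can_fetch(user_agent, url)


# Entirely malformed: no line has the "field: value" shape, so this parser
# finds no rules at all and places no restrictions on crawling.
garbage = ["disallow everything please", "<html>not a robots file</html>"]

# Partly malformed: the junk line is skipped, the valid group still applies.
mixed = ["disallow everything please", "User-agent: *", "Disallow: /private/"]

print(allowed(garbage, "https://www.yourdomain.com/private/page"))
print(allowed(mixed, "https://www.yourdomain.com/private/page"))
print(allowed(mixed, "https://www.yourdomain.com/public/page"))
```

The practical takeaway is that a typo in a directive can silently turn a rule off, so it is worth testing the file after every change.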

How do you create a robots.txt file?

Creating a robots.txt file is easy. All you need is a text editor (like Brackets or Notepad) and access to your website’s files (via FTP or a control panel).

Before getting into the process of creating a robots.txt file, the first thing to do is to check whether you already have one.

The easiest way to do this is to open a new browser window and navigate to https://www.yourdomain.com/robots.txt, replacing yourdomain.com with your own domain.
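If you would rather script this check, for example across several sites, the sketch below does the same thing with Python's standard library. The function names `robots_url` and `has_robots_txt`, the `robots-check-example` user agent string, and the 10-second timeout are assumptions chosen for illustration.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import Request, urlopen


def robots_url(site: str) -> str:
    """Build the robots.txt URL for a site; the file lives at the site root."""
    if "://" not in site:
        site = "https://" + site  # assume HTTPS when no scheme is given
    parts = urlsplit(site)
    # Keep only scheme and host; drop any path, query string, or fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def has_robots_txt(site: str, timeout: float = 10.0) -> bool:
    """Return True if the site answers the robots.txt URL with HTTP 200."""
    request = Request(
        robots_url(site),
        headers={"User-Agent": "robots-check-example"},  # hypothetical name
    )
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # Covers HTTP errors such as 404, DNS failures, and timeouts.
        return False
```

Calling `has_robots_txt("www.yourdomain.com")` with your own domain returns `True` when the file is served. A `False` result can also mean the site was unreachable, so confirm in a browser before concluding that the file is missing.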